Rarely is a book’s title, subbed How Institutions Decay and Economies Die, so well-suited for its own description. Let me start by noting that I agree with Niall Ferguson’s principal charge: that institutions, more than geography and culture, explain global economic disparity, and that their decay – especially in the West – should be of concern, or at least of more concern than it is right now. Also, despite the fact that he seems to know almost nothing about monetary policy, I found The Ascent of Money to be invaluable. Never, however, have I read a book – from an endowed chair at Harvard, no less – that is so blatant in its fraudulent claims, vindicated only by a lawyerly interpretation of grammar: something, as any reader of the book knows, Ferguson does not like.

This book is clearly written for a lay audience, given its understandably pedantic explanation of what exactly an “institution” is (no, friends, not a mental asylum, Ferguson reminds us). It is therefore fair to assume that Ferguson does not expect his audience to have read the papers cited throughout. It is his responsibility as a member of elite academia to represent those papers with honesty and scholarship. As page 100 of the Allen Lane copy suggests, Ferguson does not agree:

It is startling to find how poorly the United States now fares when judged by these criteria [relating to the ease of doing business]. In a 2011 survey, [Michael] Porter [of Harvard] and his colleagues asked HBS alumni about 607 instances of decisions on whether or not to offshore operations. The United States retained the business in just ninety-six cases (16 per cent) and lost it in all the rest. Asked why they favoured foreign locations, the respondents listed the areas where they saw the US falling further behind the rest of the world. The top ten reasons included:

1. the effectiveness of the political system;

2. the complexity of the tax code;

3. regulation;

4. the efficiency of the legal framework;

5. the flexibility in hiring and firing.

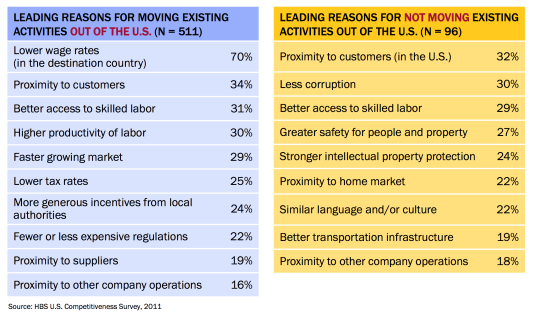

As it happens, I have read this paper. And, like I said, the only way Ferguson’s cherry-picked nonsense can be justified is through the emphasized grammar. Indeed the “top ten” reasons did (in different words) include the above. Take a look for yourself:

The reader is led to believe that the five listed reasons are at or near the top of such a list created by Porter and his team. To the contrary, the biggest reason, almost twice as prevalent as anything else, was “Lower wage rates (in the destination country)”. That would seem to suggest that “globalization” and “technology” play a role, which Ferguson shrugs off as irrelevant. The tax system is sixth down the list and cannot in any way be considered primary. There is a chance that Ferguson was using another, more defensible, graph in defense of his claim:

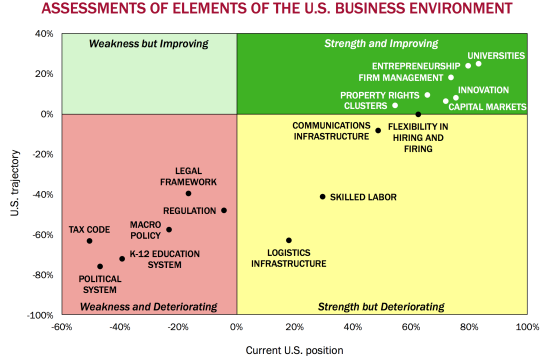

However, this division does not emerge directly from the survey of HBS alumni themselves, as Ferguson suggests, but from later analysis. These are not “responses” at all. Furthermore, even though the higher skill and education of foreign labor were core components of offshoring, Ferguson does not cite America’s lagging “K-12 education system” from the above graph. Or take the “flexibility in hiring and firing” which, from this graph, isn’t definitively a weakness, or deteriorating at all. (Edit: if it is this graph, “flexibility in hiring and firing” is still a very clear strength – and looks stable. So it would be purely dishonest to list it as a reason for decay. It’s like cherry-picking cherries from an apple orchard.) He fails even to mention “logistics infrastructure” and “skilled labor”, whose deterioration leads the authors to note that “investments in public goods crucial to competitiveness came under increasing pressure”. They do not gel with his hypothesis that “state is the enemy of society”.

What of Reinhart and Rogoff’s famous report, “Growth in a Time of Debt”? This study has well-documented methodological and computational errors, but even before these were furiously publicized in the past three months – in academic circles to which Ferguson supposedly belongs – the results were highly disputed. Even the authors, explicitly at least, warned against confusing correlation with causation. But Niall Ferguson notes “Carmen and Vincent Reinhart and Ken Rogoff show that debt overhangs were associated with lower growth”. “Associated” with? Okay, cool. But then he, gleefully, argues against deficits “because the debt burden lowers growth”. And here I thought they taught historians about correlation and causation at Oxford. Also, with gay abandon, he ignores R and R’s consistent claim that inflation is a necessary tool today, arguing instead that higher inflation will lead to no good, and signing his name on letters warning of hyperinflation.

Already, there is a pattern of citing microscopic elements of large, nuanced (in the former case), or debunked (in the latter case) studies without providing the appropriate context, or indeed the truth at all. Again, grammar – and that alone – can vindicate Ferguson’s convoluted logic.

The confusion doesn’t end with misrepresentation of facts and opinions, however. He even misrepresents the legends. Most educated but lay readers know very little of Adam Smith, only something about the free market and the famously invisible hand of the price mechanism. So they can be easily lied to about his beliefs. Ferguson inaugurates his book with a tribute to Smith via his writings on “the Stationary State” (the “West” today), noting:

I defy the Western reader not to feel an uneasy sense of recognition in contemplating these two passages.

He pastes Smith’s lucid argument that the living standards of labor are high only in the “progressive” state, “hard” in the “stationary state” and “miserable” in the “declining state”.

This Smithian motif continues throughout the book. What readers are not told is that Adam Smith believed all growth eventually ends in a stationary state. Famous phrases like “the division of labor is limited by the extent of the market” aren’t catchy for nothing. He believed that as wages increased, so too would the population, pushing wages back down in a perpetual negative feedback loop toward a steady-state population.

Adam Smith did believe that deregulation and free trade – contrary to the mercantilist principles of the day – would raise that maximum steady state. But he, like so many classical economists around him, did not believe in the power of human ingenuity to lift human living conditions. Permanently. And he certainly did not nuance his argument with modern prescriptions of “institutional change”, as forwarded by Acemoglu and Robinson, as Ferguson tries to have you believe.

And if the misrepresentation of philosophers (sorry, Professor Ferguson, but John Stuart Mill bordered on socialist), economists, facts, and opinions is not enough, perhaps the sheer internal inconsistency of the whole thing is. He casually notes:

Nor can we explain the great divergence [between the West and the Rest] in terms of imperialism; the other civilizations did plenty of that before Europeans began crossing oceans and conquering.

In the next paragraph, I kid you not, he cites “the ghost acres” of enslaved Caribbean farmers,

which were soon providing the peoples of the Atlantic metropoles with abundant sugar, a compact source of calories unavailable to most Asians.

SUCKERS! He also argues that the massive buildup of British debt “was a benign development”. But there was one big difference between them, and us. They were imperialists:

Though the national debt grew enormously in the course of England’s many wars with France, reaching a peak of more than 260 per cent of GDP in the decade after 1815, this leverage earned a handsome return, because on the other side of the balance sheet, acquired largely with a debt-financed navy, was a global empire […] There was no default. There was no inflation. And Britannia bestrode the globe.

Alright, folks, so this is what tenured professors at by far the world’s most prestigious university want you to know: debt is okay so long as it finances a navy and enslaves other people on whom you may force a market. Or if it fuels a war (throughout the book Ferguson talks about “peacetime” debt, as if using your credit card to kill and maim is chill). This explains why Niall Ferguson was hush about George Bush’s credit-fueled war on Iraq, and presumably the lovely occupation thereafter. But when debt finances education or infrastructure, food stamps or healthcare, unemployment relief or development aid, we must “be cautious of inflation and slower growth”. Thereupon we develop Fergusonian Inequivalence: Ricardian equivalence, by economic law, holds only for peacetime debt. What?

In fact, contrary to his initial claim, the whole damn chapter is about the benefits of imperialism. But here’s a little secret: just as not everyone can run a trade surplus, not everyone can imperialize the shit out of other countries. In fact, Niall Ferguson’s love affair with imperialism runs deeper than it appears at first glance. Throughout the book, he pays tribute to the responsible “capital accumulation” that so helped 19th century European economies. However, as we know from John A. Hobson, one of England’s best economists, imperialism is the direct and necessary outgrowth of such accumulation. Capitalists could no longer earn sufficient profit on domestic consumption alone, requiring large and bountiful export markets. Furthermore, domestic industry would no longer require capital at a rate commensurate with high profits, so that capital would need to be invested somewhere else. Contrast, hence, the domestic versus national products of British colonies to see just what Niall Ferguson believes was a good thing.

I would devote paragraphs to every other flaw within, but then I’d have to write something longer than the book itself, so here’s a good summary:

- Ferguson repeatedly invokes Edmund Burke’s “partnership of the generations” in the context of fiscal irresponsibility. He’s also delighted about the failures of the “green fantasy” and more than once criticizes those worrying about degrading the “environment”. I will leave it as an exercise to the reader to find the irony.

- He keeps talking as if central banks should control something called “asset price inflation”. First of all, huh? Second of all, we tried that once upon a time. It’s called the “gold standard”. Remember how that went? (If you need a history lesson, take a look at Europe today)

- He talks a lot about America’s broken regulation system and how it’s “consistently” beaten by Hong Kong. Yes.

- He cites World Bank Ease of Doing Business indicators, and yet fails to tell the reader – shock! – that the only three countries ahead of America (Singapore, Hong Kong, and New Zealand) each have a population smaller than New York’s. He has no problem, however, citing Heritage’s far more subjective “Economic Freedom” index which places America far behind. By the way, Heritage is a far more scholarly organization than the World Bank, folks.

- He tells us in a footnote that Iran’s appearance on IFC’s “Doing Business” report “is a reminder that such databases must be used with caution”. So apparently his only inclusion criterion for “further consideration” is the Boolean variable “is this country part of an Axis of Evil”.

- As Daniel Altman has suggested, British legal institutions may not be all that great.

- He consistently wants to confuse the reader with stocks and flows. Sometimes China is this amazing story of incredible growth of which America should be afraid. He devotes pages and pages to the West’s relative decline against the “Rest”. But suddenly, faced with a purported counterpoint to his argument regarding state capitalism, he also notes that America is way more productive than China.

- On this note, he waxes eloquent about the “Rest’s” amazing sovereign wealth funds valued in the trillions. On the same page, he talks about the ills of state capitalism. Huh? Where exactly does he think China and Saudi Arabia get their money from? Selling software?

- For that matter, he talks about China’s “artificial” purchase of American debt keeping American interest rates unfairly low. What exactly does he think China should do with its trade surplus? Burn it?

- (A retrospective edit from my notes). He so dismissively passes off Steven Pinker’s claim that violence has decreased over time, saying he hasn’t seen “statistics”; does he know his colleague has written a 700-page book about the subject? It also casts doubt on his whole “this is peacetime” thing.

- He devotes a whole chapter to the erosion of civil society. Beneath the veneer of an actually valuable point is an argument that we should replace progressive taxes with volunteerism. Yeah, okay, that’s new. But anyway, an overwhelming portion of civil charities believe government provision of goods and services to the poor is complementary to their job.

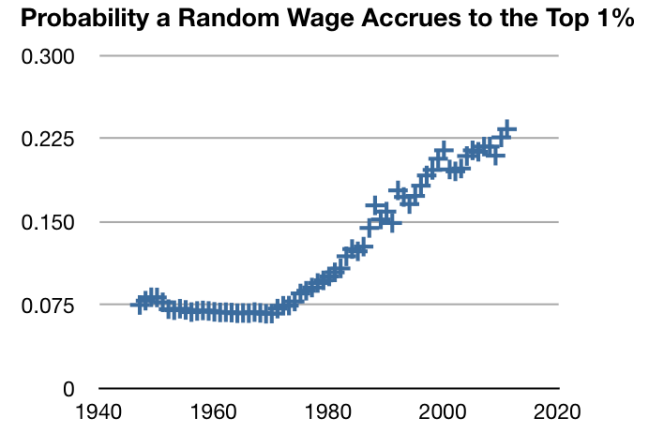

- He bemoans the fact that even if the average donation amount has increased, the number of people who donate has fallen. This bothers me too. Except I don’t call it the battle between “Civil and Uncivil Societies”. I call it “inequality”. As the divergence between mean and median wages continues, this is to be expected. That is by definition the result of an increase in relative poverty.

But nothing so far comes close to measuring the deepest flaw in this book, and indeed his worldview. The whole argument behind his “fall of the West” thesis comes from relative decline. Indeed, the first graph in the whole book shows the historical ratio between British and Indian per capita income levels. Maybe the recent fall in that ratio bothers neocolonialists like Niall Ferguson, but to me it is empowering. To me it is the amazing comeuppance (as pointed out by commenter Julian, I’ve used this word incorrectly) rise of my country – which, mind you, was suppressed for so long by his. I would not make it personal if he shared the accepted, and indeed correct, view that colonialism was largely extractive, with a few piecemeal benefits on the side.

Let me tell you, Niall Ferguson, that I will not be happy until the damned ratio in your primary graph falls to, and below, one. What you see as the fall of some stupid and glorious ideology is what over three billion people of this Earth see as the final coming of dignity and prosperity.

As an American I share with you the concern about decaying institutions. But I’m looking at pictures of Detroit and New York City after Hurricane Sandy. As a true believer in market institutions I am looking with concern, but full understanding, at the Occupy movement. I am looking at a world which now mistakes America for a warmonger and projects onto us a mantle you wear so elegantly: “imperialist”.

There are different levels of argument. There is that of fact. Then of analysis. And then of meta-analysis and beyond. Debunking this book requires little more than the first. For it is nothing more than the whimper of a dying idea.